Building a production-grade voice AI agent is one of the hardest engineering challenges in applied machine learning today. It isn’t just about transcription accuracy. You need a system that can hold context across a five-minute conversation, invoke external APIs mid-call without an awkward pause, gracefully recover when a caller corrects themselves, and do all of this reliably when the audio is degraded by background noise, a heavy accent, or a dropped word. Most existing systems handle one or two of those requirements. xAI’s newly released grok-voice-think-fast-1.0 makes a serious claim to handle all of them, and the benchmark numbers back it up.

Available via the xAI API, grok-voice-think-fast-1.0 is xAI’s new flagship voice model. It is purpose-built for complex, ambiguous, multi-step workflows across customer support, sales, and enterprise applications, and it is already deployed at scale, powering Starlink’s live phone operations.

What Makes a Voice Agent Full-Duplex?

Before unpacking the benchmark results, it’s worth understanding what kind of model grok-voice-think-fast-1.0 is. It is evaluated on the τ-voice Bench as a full-duplex voice agent. The system processes incoming speech and generates responses simultaneously, rather than waiting for the speaker to stop before it begins thinking. This is how humans communicate in real conversations. It is also why handling interruptions is a genuinely hard technical problem: the model must decide in real time whether a mid-sentence utterance is a correction, a clarification, or just a filler word, and adjust its behavior accordingly.

The τ-voice Bench evaluates agents specifically under these realistic conditions: noise, accents, interruptions, and natural turn-taking. That makes it a more relevant measure for production deployments than traditional clean-audio ASR benchmarks.

The Numbers: A Significant Lead

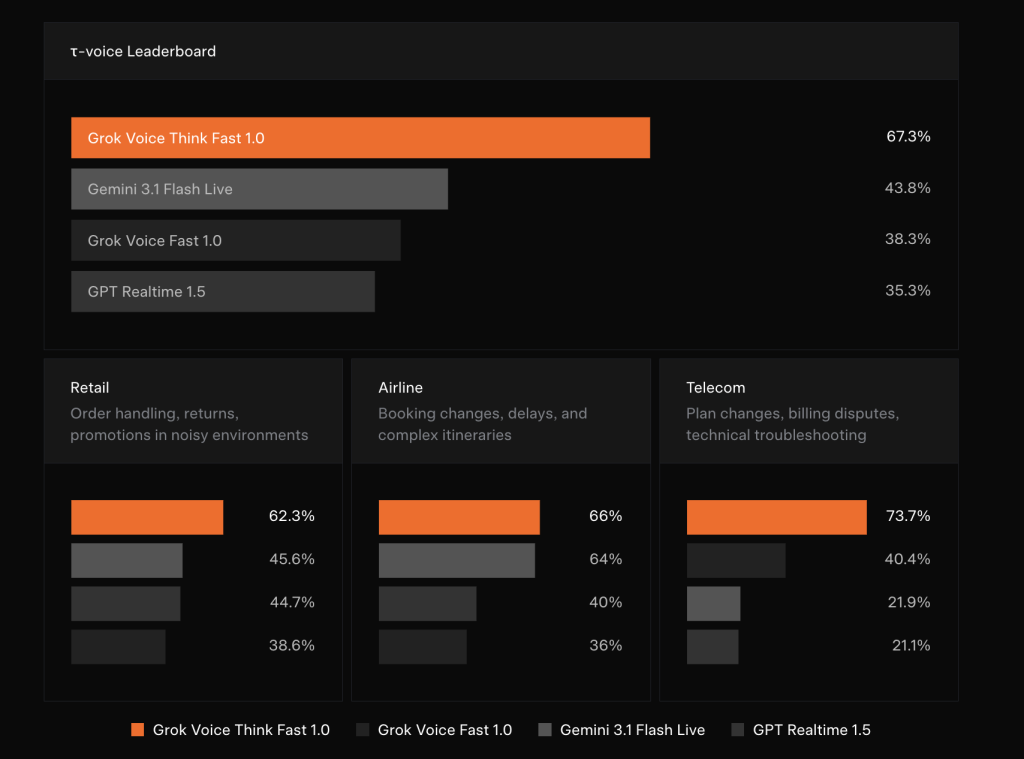

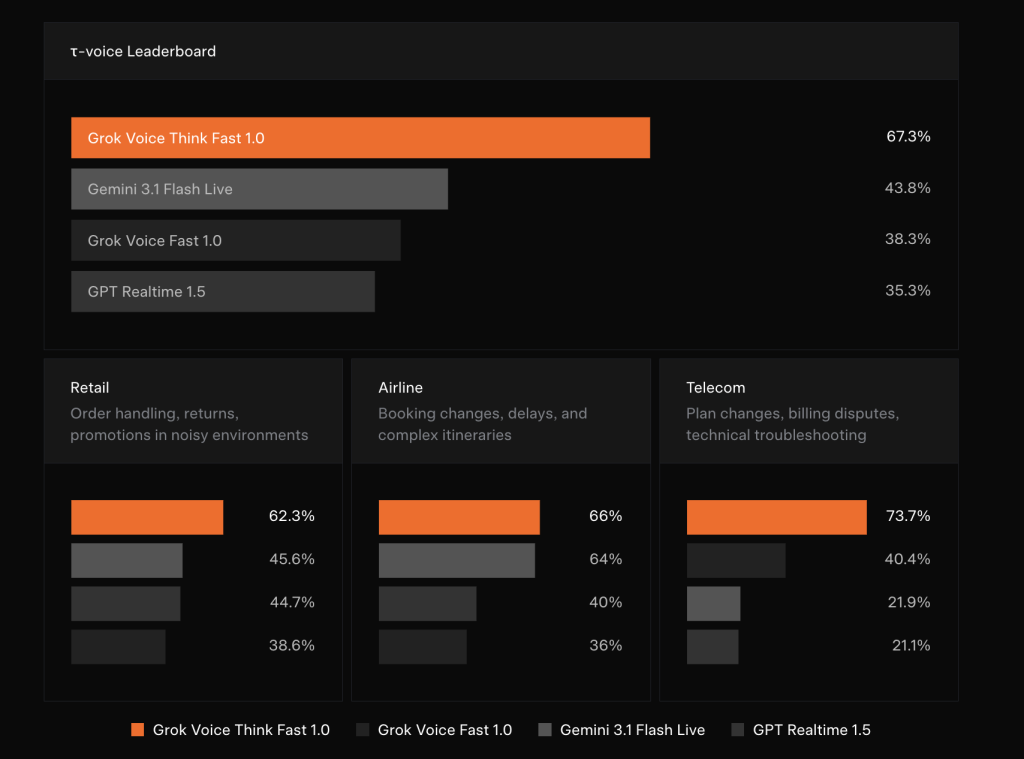

The benchmark results xAI published are striking in how large the gaps are. On the τ-voice Bench overall leaderboard, grok-voice-think-fast-1.0 scores 67.3%, compared with 43.8% for Gemini 3.1 Flash Live, 38.3% for Grok Voice Fast 1.0 (xAI’s own previous model), and 35.3% for GPT Realtime 1.5.

Breaking that down by vertical tells an even clearer story:

In Retail (order handling, returns, and promotions in noisy environments), grok-voice-think-fast-1.0 scores 62.3%, followed by Grok Voice Fast 1.0 at 45.6%, Gemini 3.1 Flash Live at 44.7%, and GPT Realtime 1.5 at 38.6%.

In Airline (booking changes, delays, and complex itineraries), the scores are 66% for grok-voice-think-fast-1.0, 64% for Grok Voice Fast 1.0, 40% for Gemini 3.1 Flash Live, and 36% for GPT Realtime 1.5.

The most dramatic gap appears in Telecom (plan changes, billing disputes, and technical troubleshooting), where grok-voice-think-fast-1.0 achieves 73.7%, while Grok Voice Fast 1.0 scores 40.4%, Gemini 3.1 Flash Live 21.9%, and GPT Realtime 1.5 21.1%. A 33-percentage-point lead over the next competitor in a single vertical is not a marginal improvement. That’s an architectural advantage.
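The margins quoted above follow directly from the published scores. As a quick check, the short Python sketch below recomputes the leader's gap over the runner-up in each category. The figures are the ones cited in this article; the dictionary layout and the function name are illustrative choices, not anything published by xAI.

```python
# τ-voice Bench scores (percent) as quoted in this article.
SCORES = {
    "Overall": {"grok-voice-think-fast-1.0": 67.3, "Gemini 3.1 Flash Live": 43.8,
                "Grok Voice Fast 1.0": 38.3, "GPT Realtime 1.5": 35.3},
    "Retail": {"grok-voice-think-fast-1.0": 62.3, "Grok Voice Fast 1.0": 45.6,
               "Gemini 3.1 Flash Live": 44.7, "GPT Realtime 1.5": 38.6},
    "Airline": {"grok-voice-think-fast-1.0": 66.0, "Grok Voice Fast 1.0": 64.0,
                "Gemini 3.1 Flash Live": 40.0, "GPT Realtime 1.5": 36.0},
    "Telecom": {"grok-voice-think-fast-1.0": 73.7, "Grok Voice Fast 1.0": 40.4,
                "Gemini 3.1 Flash Live": 21.9, "GPT Realtime 1.5": 21.1},
}


def lead_over_runner_up(scores):
    """Return (leader, runner_up, gap in percentage points) for one category."""
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    (leader, top), (runner_up, second) = ranked[0], ranked[1]
    return leader, runner_up, round(top - second, 1)


for category, scores in SCORES.items():
    leader, runner_up, gap = lead_over_runner_up(scores)
    print(f"{category}: {leader} leads {runner_up} by {gap} points")
```

Run as written, it reports leads of 23.5 points overall, 16.7 in Retail, 2.0 in Airline, and 33.3 in Telecom. The margin varies widely by vertical, and in Airline the new model is only narrowly ahead of xAI's previous one.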

Real-Time Reasoning With Zero Added Latency

One of the most technically significant design decisions in this model is how reasoning is handled. grok-voice-think-fast-1.0 performs reasoning in the background, thinking through challenging queries and workflows in real time with no impact on response latency. For AI teams, this is the hard part to build: reasoning models traditionally increase response time because they generate intermediate ‘thinking’ tokens before producing an answer. Hiding that computation from the conversational latency budget, while still benefiting from it, requires careful architecture work.

The practical payoff is accuracy without sluggishness. The xAI team demonstrates this with a representative edge case: when asked “Which months of the year are spelled with the letter X?”, grok-voice-think-fast-1.0 correctly responds that no month contains the letter X. In contrast, the competing models confidently and incorrectly answered “February.” This class of error, where a model produces a plausible-sounding but wrong answer with high confidence, is particularly damaging in voice interfaces because users have no text output to cross-check.
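xAI has not published how the background reasoning is implemented, so the following is only a generic illustration of the idea of keeping slow computation off the conversational critical path. It is a minimal Python `asyncio` sketch in which a reasoning task runs concurrently while the agent is already speaking. Every name here (`reason`, `speak`, `respond`) and every timing is a hypothetical stand-in, not part of the xAI API.

```python
import asyncio
import time


async def reason(query: str) -> str:
    """Hypothetical slow reasoning step; the sleep stands in for 'thinking' tokens."""
    await asyncio.sleep(0.3)
    return f"answer to: {query}"


async def speak(text: str, seconds: float) -> None:
    """Hypothetical audio playback; the sleep simulates the time spent speaking."""
    await asyncio.sleep(seconds)


async def respond(query: str):
    """Overlap reasoning with speech so the caller never waits in silence."""
    start = time.perf_counter()
    thinking = asyncio.create_task(reason(query))   # reasoning starts in the background
    await speak("Sure, let me check that.", 0.3)     # the caller hears this right away
    answer = await thinking                          # typically finished by now
    return answer, time.perf_counter() - start


answer, elapsed = asyncio.run(respond("change my plan"))
print(answer, f"({elapsed:.2f}s total)")
```

In this toy setup the total time is roughly the longer of the two steps rather than their sum, which is the effect the article describes. A real full-duplex system would have to achieve this at the level of audio frames and model decoding rather than with sleeps.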

Precise Data Entry and Read-Back

A core workflow capability of grok-voice-think-fast-1.0 is structured data capture and read-back. The model can seamlessly collect email addresses, physical street addresses, phone numbers, full names, account numbers, and other structured data, even when information is spoken quickly or with a strong accent. It gracefully handles speech disfluencies and accepts natural corrections as a human would, then reads back the confirmed data to the user.

xAI illustrates this with a concrete example. A caller says: “Yep, it’s 1410, uh wait, 1450 Page Mill Street. Actually no sorry, that’s Page Mill Road.” The model processes the spoken corrections in real time, invokes a search_address tool with the corrected parameter "1450 Page Mill Rd", and reads back the normalized address for user confirmation. For data teams who have spent time building post-call cleanup pipelines to extract structured fields from messy transcripts, this native capture-and-read-back capability represents a meaningful reduction in downstream processing complexity.

The model has been battle-tested in the toughest real-world conditions: telephony audio, background noise, heavy accents, and frequent interruptions. It natively supports 25+ languages, making it well suited to global deployments across use cases including customer support, phone sales, appointment booking, and restaurant reservations.
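To make the tool-call step concrete, here is a minimal Python sketch of what the application side of such a flow might look like. Only the tool name `search_address` and the argument value "1450 Page Mill Rd" come from xAI's example. The parameter name `query`, the JSON-schema-style definition (loosely modeled on a convention many function-calling APIs use), the suffix table, and the read-back wording are all assumptions; consult the xAI documentation for the actual schema.

```python
import json

# Hypothetical tool definition; the parameter name "query" is an assumption.
SEARCH_ADDRESS_TOOL = {
    "type": "function",
    "name": "search_address",
    "description": "Look up and normalize a street address.",
    "parameters": {
        "type": "object",
        "properties": {"query": {"type": "string"}},
        "required": ["query"],
    },
}

# Tiny illustrative suffix table; a real handler would call an address service.
SUFFIXES = {"road": "Rd", "street": "St", "avenue": "Ave"}


def search_address(query: str) -> dict:
    """Stub handler that only normalizes the trailing street suffix."""
    words = query.split()
    if not words:
        raise ValueError("empty address query")
    words[-1] = SUFFIXES.get(words[-1].lower().rstrip("."), words[-1])
    return {"normalized": " ".join(words)}


def handle_tool_call(name: str, arguments_json: str) -> str:
    """Dispatch a tool call emitted by the model and build the read-back line."""
    if name != SEARCH_ADDRESS_TOOL["name"]:
        raise ValueError(f"unknown tool: {name}")
    args = json.loads(arguments_json)
    result = search_address(args["query"])
    return f"I have {result['normalized']}. Is that correct?"


print(handle_tool_call("search_address", json.dumps({"query": "1450 Page Mill Rd"})))
```

The point of the sketch is that the application receives an already-corrected argument: resolving "1410, uh wait, 1450" and "Street... no, Road" happens inside the model, so the handler does not need to parse the messy transcript.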

The Starlink Deployment: Production at Scale

The most compelling validation of grok-voice-think-fast-1.0 is not the benchmark alone but its live deployment. Grok Voice powers the entire phone sales and customer support operation for Starlink at +1 (888) GO STARLINK. The numbers xAI discloses from this deployment are operationally significant: a 20% sales conversion rate (meaning one in five callers making a sales inquiry purchases Starlink service while on the phone with Grok), a 70% autonomous resolution rate for customer support inquiries with no human in the loop, and a single agent operating across 28 distinct tools spanning hundreds of support and sales workflows.

Key Takeaways

- grok-voice-think-fast-1.0 leads the τ-voice Bench with a 67.3% score, outperforming Gemini 3.1 Flash Live (43.8%), Grok Voice Fast 1.0 (38.3%), and GPT Realtime 1.5 (35.3%).

- The model performs background reasoning with zero added latency, allowing it to think through complex, multi-step workflows in real time without slowing down conversational responses.

- Precise data entry and read-back is a native capability, enabling the model to capture and confirm structured data like names, addresses, phone numbers, and account numbers even when spoken quickly, with an accent, or with mid-sentence corrections.

- The model supports 25+ languages and high-volume tool calling, making it deployable across global enterprise use cases including customer support, phone sales, appointment booking, and restaurant reservations.

- Starlink’s live deployment demonstrates production readiness at scale: a single Grok Voice agent operates across 28 tools and hundreds of workflows, achieving a 20% sales conversion rate and autonomously resolving 70% of customer support inquiries with no human in the loop.
