Image by Author

# Introduction

When you apply for a job at Meta (formerly Facebook), Apple, Amazon, Netflix, or Alphabet (Google), collectively known as FAANG, interviews rarely test whether you can recite textbook definitions. Instead, interviewers want to see whether you analyze data critically and whether you would catch a bad analysis before it ships to production. Statistical traps are one of the most reliable ways to test that.

These pitfalls reflect the kinds of decisions that analysts face every day: a dashboard number that looks fine but is actually misleading, or an experiment result that looks actionable but contains a structural flaw. The interviewer already knows the answer. What they are watching is your thought process, including whether you ask the right questions, notice missing information, and push back on a number that looks good at first sight. Candidates stumble over these traps repeatedly, even those with strong mathematical backgrounds.

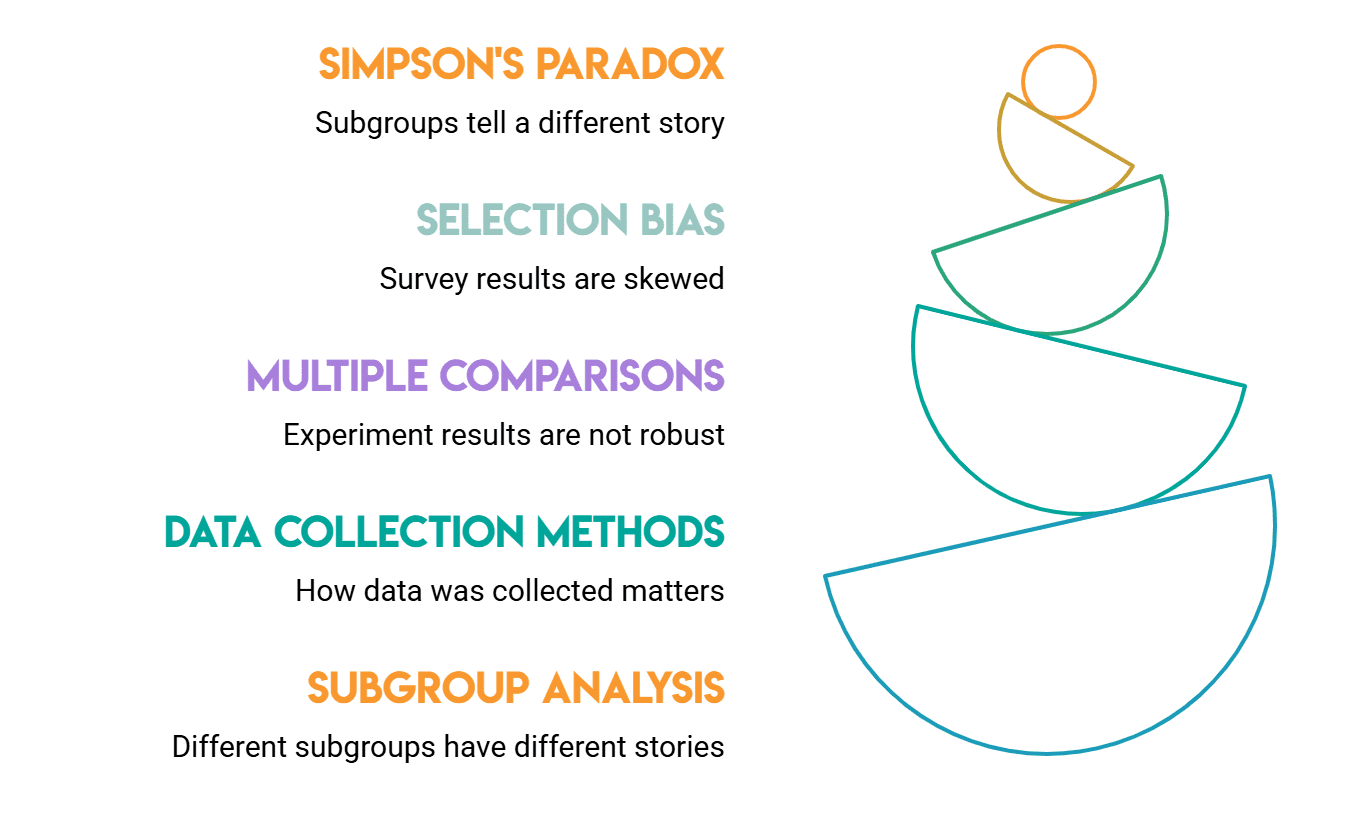

We’ll examine five of the most common traps.

# Understanding Simpson’s Paradox

This trap aims to catch people who unquestioningly trust aggregated numbers.

Simpson’s paradox happens when a trend appears in several groups of data but vanishes or reverses when those groups are combined. The classic example is UC Berkeley’s 1973 admissions data: overall admission rates favored men, but when broken down by department, women had equal or better admission rates. The aggregate number was misleading because women applied to more competitive departments.

The paradox can arise whenever groups have different sizes and different base rates. Understanding that is what separates a surface-level answer from a deep one.
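One way to see why is that an aggregate rate is just a traffic-weighted average of the per-group rates. The short sketch below illustrates this with made-up numbers; the helper name `aggregate_rate` and every rate and traffic share are assumptions chosen for illustration, not figures from a real experiment.

```python
def aggregate_rate(group_rates, group_shares):
    """Overall rate = sum of each group's rate times its share of traffic."""
    assert abs(sum(group_shares) - 1.0) < 1e-9, "shares must sum to 1"
    return sum(r * s for r, s in zip(group_rates, group_shares))

# Hypothetical per-group conversion rates, ordered as [mobile, desktop]
rates_a = [0.90, 0.10]   # A is better within both groups...
rates_b = [0.85, 0.05]

# ...but the two variants see very different traffic mixes.
shares_a = [0.10, 0.90]  # A: mostly low-converting desktop
shares_b = [0.90, 0.10]  # B: mostly high-converting mobile

overall_a = aggregate_rate(rates_a, shares_a)
overall_b = aggregate_rate(rates_b, shares_b)

print(f"A overall: {overall_a:.3f}")
print(f"B overall: {overall_b:.3f}")
```

Because the weights differ between variants, B can come out ahead overall even though A has the higher rate in each group.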

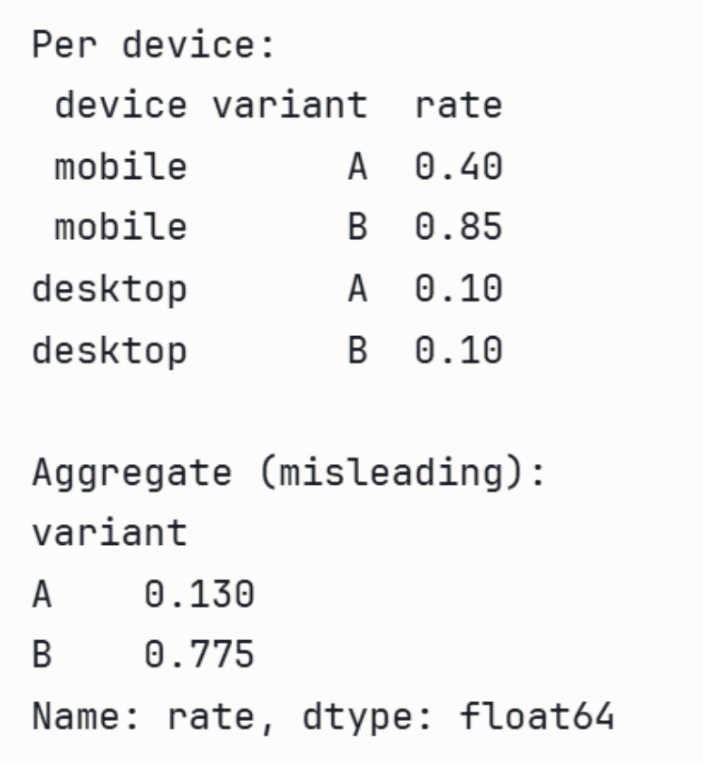

In interviews, a question might look like this: “We ran an A/B test. Overall, variant B had a higher conversion rate. However, when we break it down by device type, variant A performed better on both mobile and desktop. What is happening?” A strong candidate names Simpson’s paradox, explains its cause (group proportions differ between the two variants), and asks to see the breakdown rather than trust the aggregate figure.

Interviewers use this to check whether you instinctively ask about subgroup distributions. If you just report the overall number, you have lost points.

// Demonstrating With A/B Test Data

In the following demonstration using Pandas, we can see how the aggregate rate can be misleading.

    import pandas as pd

    # A wins on both devices individually, but B wins in aggregate
    # because B gets most of its traffic from higher-converting mobile.
    data = pd.DataFrame({
        'device': ['mobile', 'mobile', 'desktop', 'desktop'],
        'variant': ['A', 'B', 'A', 'B'],
        'converts': [90, 765, 90, 5],
        'visitors': [100, 900, 900, 100],
    })

    data['rate'] = data['converts'] / data['visitors']

    print('Per device:')
    print(data[['device', 'variant', 'rate']].to_string(index=False))

    print('\nAggregate (misleading):')
    agg = data.groupby('variant')[['converts', 'visitors']].sum()
    agg['rate'] = agg['converts'] / agg['visitors']
    print(agg['rate'])


# Identifying Selection Bias

This trap lets interviewers assess whether you think about where data comes from before analyzing it.

Selection bias arises when the data you have is not representative of the population you are trying to understand. Because the bias is in the data collection process rather than in the analysis, it is easy to overlook.

Consider these possible interview framings:

- “We analyzed a survey of our users and found that 80% are satisfied with the product. Does that tell us our product is good?” A solid candidate would point out that satisfied users are more likely to respond to surveys. The 80% figure probably overstates satisfaction, since unhappy users most likely chose not to participate.

- “We examined customers who left last quarter and found that they mostly had poor engagement scores. Should we focus on engagement to reduce churn?” The problem here is that you only have engagement data for churned users. Without engagement data for users who stayed, it is impossible to know whether low engagement actually predicts churn or is simply common among all users, retained ones included.
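The churn framing can be made concrete with a small simulation. The sketch below assumes a hypothetical user base in which engagement is low for almost everyone and churn is assigned independently of engagement; the distribution, the 20% churn rate, and the 0.3 cutoff for "poor engagement" are all illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical population: engagement scores are low for almost everyone,
# and churn is assigned independently of engagement.
n_users = 10_000
engagement = rng.beta(2, 8, size=n_users)   # skewed toward low scores
churned = rng.random(n_users) < 0.2         # 20% churn, unrelated to engagement

low = engagement < 0.3                      # arbitrary "poor engagement" cutoff

share_low_churned = low[churned].mean()
share_low_retained = low[~churned].mean()

print(f"Churned users with low engagement:  {share_low_churned:.1%}")
print(f"Retained users with low engagement: {share_low_retained:.1%}")
```

Looking only at the churned group, "most churned users had poor engagement" would be true, yet the retained group looks nearly identical. Only the comparison between the two groups can say whether engagement is related to churn.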

A related variant worth knowing is survivorship bias: you only observe the outcomes that made it through some filter. If you only use data from successful products to analyze why they succeeded, you are ignoring the products that had the same traits you are treating as strengths and failed anyway.

// Simulating Survey Non-Response

We can simulate how non-response bias skews results using NumPy.

    import numpy as np

    np.random.seed(42)

    # Simulate users where satisfied users are more likely to respond
    satisfaction = np.random.choice([0, 1], size=1000, p=[0.5, 0.5])

    # Response probability: 80% for satisfied, 20% for dissatisfied
    response_prob = np.where(satisfaction == 1, 0.8, 0.2)
    responded = np.random.rand(1000) < response_prob

    print(f"True satisfaction rate: {satisfaction.mean():.2%}")
    print(f"Survey satisfaction rate: {satisfaction[responded].mean():.2%}")


Interviewers use selection bias questions to see whether you separate “what the data shows” from “what is true about users.”

# Stopping p-Hacking

p-hacking (also called data dredging) happens when you run many tests and only report the ones with $p < 0.05$.

The problem is that $p$-values are only meant to be interpreted for individual tests. If 20 tests are run at a 5% significance level and no real effect exists, one false positive is expected by chance alone. Fishing for a significant result inflates the false discovery rate.
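The arithmetic behind that claim is short enough to write out. The sketch below assumes every null hypothesis is true and that the tests are independent, which real experiments only approximate; the function names are illustrative.

```python
alpha = 0.05

def expected_false_positives(n_tests, alpha):
    """Expected number of false positives when every null hypothesis is true."""
    return n_tests * alpha

def prob_at_least_one(n_tests, alpha):
    """Chance of one or more false positives, assuming independent tests."""
    return 1 - (1 - alpha) ** n_tests

for n_tests in (1, 5, 15, 20):
    print(
        f"{n_tests:>2} tests: "
        f"expected false positives = {expected_false_positives(n_tests, alpha):.2f}, "
        f"P(at least one) = {prob_at_least_one(n_tests, alpha):.2f}"
    )
```

Under these assumptions, 20 tests carry roughly a 64% chance of producing at least one "significant" result with no real effect behind it.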

An interviewer might ask you the following: “Last quarter, we ran fifteen feature experiments. At $p < 0.05$, three were found to be significant. Should all three be shipped?” A weak answer says yes.

A strong answer would first ask what the hypotheses were before the tests were run, whether the significance threshold was set in advance, and whether the team corrected for multiple comparisons.

The follow-up often involves how you would design experiments to avoid this. Pre-registering hypotheses before data collection is the most direct fix, since it removes the option to decide after the fact which tests were “real.”

// Watching False Positives Accumulate

We can observe how false positives occur by chance using SciPy.

    import numpy as np
    from scipy import stats

    np.random.seed(0)

    # 20 A/B tests where the null hypothesis is TRUE (no real effect)
    n_tests, alpha = 20, 0.05
    false_positives = 0

    for _ in range(n_tests):
        a = np.random.normal(0, 1, 1000)
        b = np.random.normal(0, 1, 1000)  # identical distribution!
        if stats.ttest_ind(a, b).pvalue < alpha:
            false_positives += 1

    print(f'Tests run: {n_tests}')
    print(f'False positives (p<0.05): {false_positives}')
    print(f'Expected by chance alone: {n_tests * alpha:.0f}')


Even with zero real effect, roughly 1 in 20 tests clears $p < 0.05$ by chance. If a team runs 15 experiments and reports only the significant ones, those results may well be noise.

It is equally important to treat exploratory analysis as a form of hypothesis generation rather than confirmation. Before anyone takes action based on an exploratory result, a confirmatory experiment is required.

# Managing Multiple Testing

This trap is closely related to p-hacking, but it is worth understanding on its own.

The multiple testing problem is the formal statistical issue: when you run many hypothesis tests simultaneously, the probability of at least one false positive grows quickly. Even if the treatment has no effect, you should expect roughly five false positives if you test 100 metrics in an A/B test and declare anything with $p < 0.05$ significant.

The corrections for this are well known: the Bonferroni correction (divide alpha by the number of tests) and the Benjamini-Hochberg procedure (which controls the false discovery rate rather than the family-wise error rate).

Bonferroni is a conservative approach: for example, if you test 50 metrics at an overall alpha of 0.05, your per-test threshold drops to 0.001, making it harder to detect real effects. Benjamini-Hochberg is more appropriate when you are willing to accept some false discoveries in exchange for more statistical power.
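To make the difference between the two corrections concrete, here is a minimal NumPy sketch of both. The function names and the ten p-values are hypothetical, and the code follows the standard textbook definitions rather than any particular library's implementation.

```python
import numpy as np

def bonferroni(p_values, alpha=0.05):
    """Reject where p <= alpha / m (controls the family-wise error rate)."""
    p = np.asarray(p_values, dtype=float)
    return p <= alpha / p.size

def benjamini_hochberg(p_values, alpha=0.05):
    """Step-up procedure: find the largest rank k with p_(k) <= (k / m) * alpha
    and reject the k smallest p-values (controls the false discovery rate)."""
    p = np.asarray(p_values, dtype=float)
    m = p.size
    order = np.argsort(p)                         # indices of p sorted ascending
    thresholds = alpha * np.arange(1, m + 1) / m  # (k / m) * alpha for k = 1..m
    passed = p[order] <= thresholds
    reject = np.zeros(m, dtype=bool)
    if passed.any():
        k = np.nonzero(passed)[0].max()           # last rank that passes
        reject[order[: k + 1]] = True
    return reject

# Hypothetical p-values for 10 metrics from one experiment
p_values = [0.001, 0.008, 0.012, 0.019, 0.030, 0.200, 0.350, 0.500, 0.700, 0.900]

bonf = bonferroni(p_values)
bh = benjamini_hochberg(p_values)
print("Bonferroni rejections:        ", int(bonf.sum()))
print("Benjamini-Hochberg rejections:", int(bh.sum()))
```

On these made-up p-values, Bonferroni keeps only the smallest one while Benjamini-Hochberg keeps several, which is the power-versus-strictness trade-off described above.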

In interviews, this comes up when discussing how a company tracks experiment metrics. A question might be: “We track 50 metrics per experiment. How do you decide which ones matter?” A solid response discusses pre-specifying primary metrics before the experiment runs and treating secondary metrics as exploratory, while acknowledging the multiple testing issue.

Interviewers are trying to find out whether you are aware that running more tests produces more noise rather than more information.

# Addressing Confounding Variables

This trap catches candidates who treat correlation as causation without asking what else might explain the relationship.

A confounding variable is one that influences both the independent and dependent variables, creating the illusion of a direct relationship where none exists.

The classic example: ice cream sales and drowning rates are correlated, but the confounder is summer heat; both go up in warm months. Acting on that correlation without accounting for the confounder leads to bad decisions.

Confounding is particularly dangerous in observational data. Unlike a randomized experiment, observational data does not distribute potential confounders evenly between groups, so the differences you see might not be caused by the variable you are studying at all.

A common interview framing is: “We noticed that users who use our mobile app more tend to have significantly higher revenue. Should we push notifications to increase app opens?” A weak candidate says yes. A strong one asks what kind of user opens the app frequently to begin with: likely the most engaged, highest-value users.

Engagement drives both app opens and spending. The app opens are not causing revenue; they are a symptom of the same underlying user quality.

Interviewers use confounding to test whether you distinguish correlation from causation before drawing conclusions, and whether you would push for randomized experimentation or propensity score matching before recommending action.
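A quick simulation shows why randomization is the preferred remedy. The sketch below assumes a hypothetical world in which revenue depends only on a hidden engagement trait and the notification nudge has no causal effect at all; every variable name and parameter is an illustrative assumption.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 20_000

# Hypothetical hidden confounder: engaged users (1) vs. casual users (0)
engaged = rng.binomial(1, 0.5, n)

# Revenue depends only on engagement; app opens have no causal effect here.
revenue = 100 * engaged + rng.normal(0, 10, n)

# Observational data: engaged users are far more likely to be heavy app users.
heavy_user_obs = rng.random(n) < np.where(engaged == 1, 0.9, 0.1)
obs_gap = revenue[heavy_user_obs].mean() - revenue[~heavy_user_obs].mean()

# Randomized experiment: a coin flip decides who gets the notification nudge,
# so engaged users are spread evenly across both arms.
nudged = rng.random(n) < 0.5
exp_gap = revenue[nudged].mean() - revenue[~nudged].mean()

print(f"Observational revenue gap (heavy vs. light users): {obs_gap:.1f}")
print(f"Experimental revenue gap (nudged vs. control):     {exp_gap:.1f}")
```

The observational comparison shows a large revenue gap even though nothing causal links app usage to spending in this setup, while the randomized comparison hovers near zero because the confounder is balanced across arms.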

// Simulating A Confounded Relationship

    import numpy as np
    import pandas as pd

    np.random.seed(42)
    n = 1000

    # Confounder: user quality (0 = low, 1 = high)
    user_quality = np.random.binomial(1, 0.5, n)

    # App opens driven by user quality, not independent
    app_opens = user_quality * 5 + np.random.normal(0, 1, n)

    # Revenue also driven by user quality, not app opens
    revenue = user_quality * 100 + np.random.normal(0, 10, n)

    df = pd.DataFrame({
        'user_quality': user_quality,
        'app_opens': app_opens,
        'revenue': revenue
    })

    # Naive correlation looks strong, which is misleading
    naive_corr = df['app_opens'].corr(df['revenue'])

    # Within-group correlation (controlling for confounder) is near zero
    corr_low = df[df['user_quality']==0]['app_opens'].corr(df[df['user_quality']==0]['revenue'])
    corr_high = df[df['user_quality']==1]['app_opens'].corr(df[df['user_quality']==1]['revenue'])

    print(f"Naive correlation (app opens vs revenue): {naive_corr:.2f}")
    print("Correlation controlling for user quality:")
    print(f" Low-quality users: {corr_low:.2f}")
    print(f" High-quality users: {corr_high:.2f}")

Output:

    Naive correlation (app opens vs revenue): 0.91
    Correlation controlling for user quality:
     Low-quality users: 0.03
     High-quality users: -0.07

The naive number looks like a strong signal. Once you control for the confounder, it all but disappears. Interviewers who see a candidate run this kind of stratified check (rather than accepting the aggregate correlation) know they are talking to someone who will not ship a broken recommendation.

# Wrapping Up

All five of these traps have something in common: they require you to slow down and question the data before accepting what the numbers seem to show at first glance. Interviewers use these scenarios precisely because your first instinct is often wrong, and the depth of your answer after that first instinct is what separates a candidate who can work independently from one who needs direction on every analysis.

None of these ideas are obscure, and interviewers ask about them because they are typical failure modes in real data work. The candidate who recognizes Simpson’s paradox in a product metric, catches selection bias in a survey, or questions whether an experiment result survived multiple comparisons is the one who will ship fewer bad decisions.

If you go into FAANG interviews with a reflex to ask the following questions, you are already ahead of most candidates:

- How was this data collected?

- Are there subgroups that tell a different story?

- How many tests contributed to this result?

Beyond helping in interviews, these habits can also prevent bad decisions from reaching production.

Nate Rosidi is a data scientist who also works in product strategy. He is an adjunct professor teaching analytics and the founder of StrataScratch, a platform that helps data scientists prepare for their interviews with real interview questions from top companies. Nate writes on the latest trends in the career market, gives interview advice, shares data science projects, and covers everything SQL.