What is catastrophic forgetting in foundation models?

Foundation models excel in diverse domains but are largely static once deployed. Fine-tuning on new tasks often introduces catastrophic forgetting: the loss of previously learned capabilities. This limitation poses a barrier to building long-lived, continually improving AI agents.

Why does online reinforcement learning forget less than supervised fine-tuning?

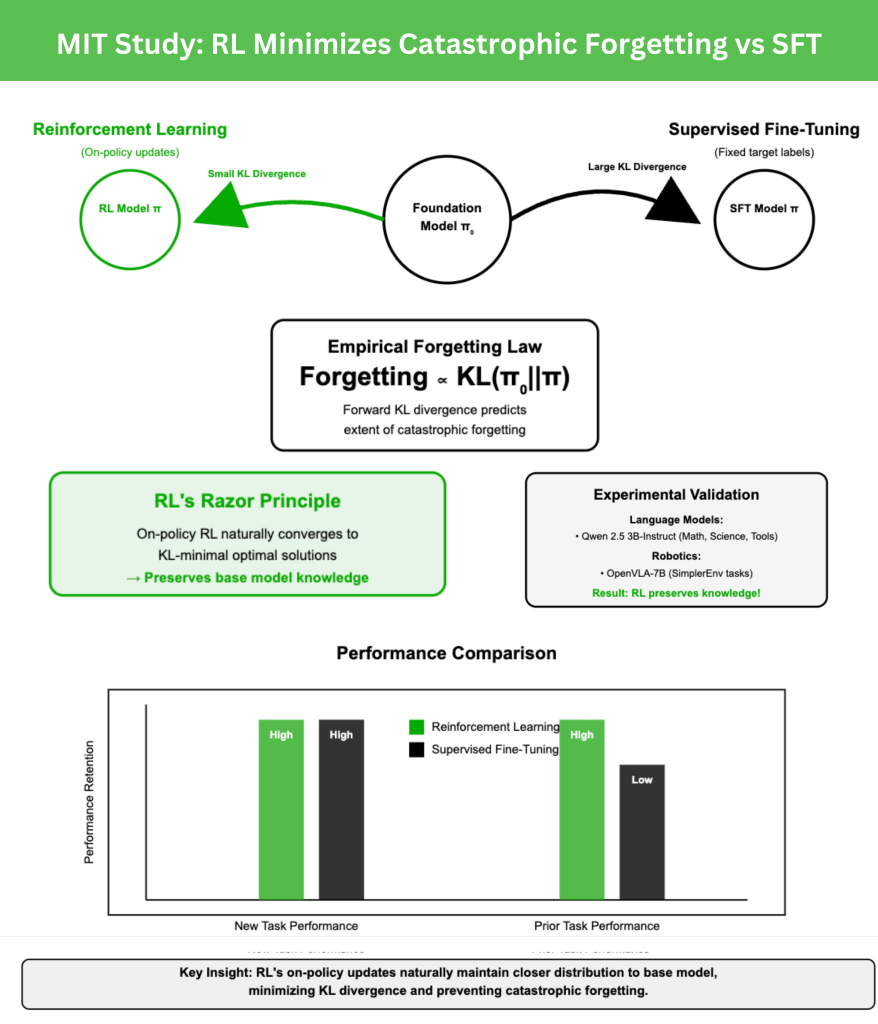

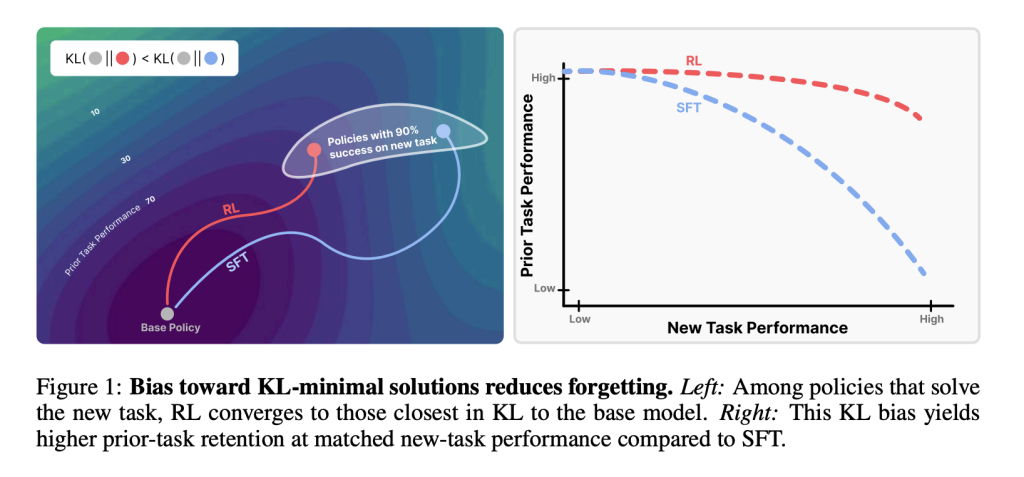

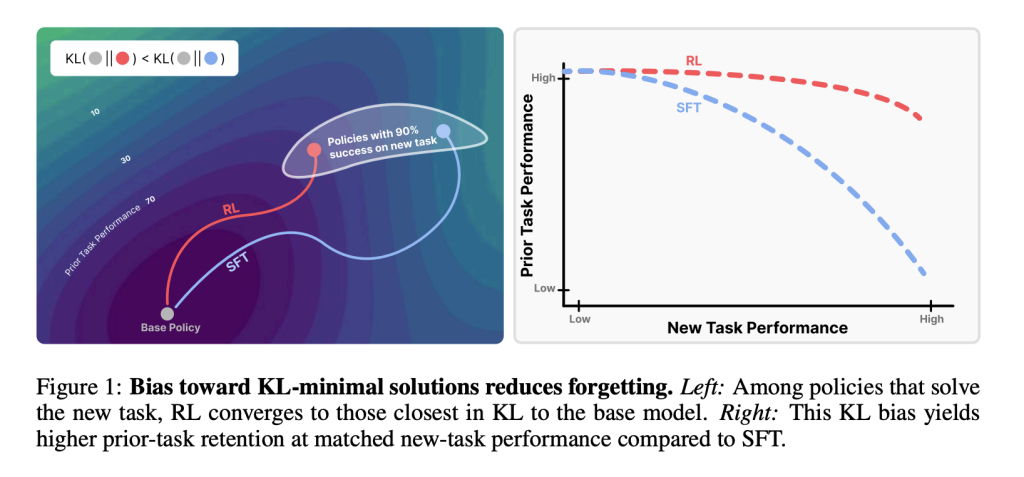

A new MIT study compares reinforcement learning (RL) and supervised fine-tuning (SFT). Both can achieve high performance on new tasks, but SFT tends to overwrite prior abilities. RL, by contrast, preserves them. The key lies in how each method shifts the model's output distribution relative to the base policy.

How can forgetting be measured?

The research team proposes an empirical forgetting law:

$$\text{Forgetting} \propto \mathrm{KL}(\pi_0 \,\|\, \pi)$$

where $\pi_0$ is the base model and $\pi$ is the fine-tuned model. The forward KL divergence, measured on the new task, strongly predicts the extent of forgetting. This makes forgetting quantifiable without needing data from prior tasks.
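
To make the measurement concrete, here is a minimal sketch of the quantity in the forgetting law, assuming each model's output distribution for a prompt can be written as a short list of probabilities. The helper names (`forward_kl`, `mean_forward_kl`) and the example distributions are hypothetical and not taken from the study. For a real language model the output space is far too large to enumerate, so the divergence would have to be estimated from sampled responses instead.

```python
import math

def forward_kl(p_base, p_tuned, eps=1e-12):
    """KL(p_base || p_tuned) for two categorical distributions over the same outputs."""
    return sum(
        p * math.log(p / max(q, eps))
        for p, q in zip(p_base, p_tuned)
        if p > 0.0  # terms with zero base probability contribute nothing
    )

def mean_forward_kl(base_dists, tuned_dists):
    """Average the forward KL over a batch of new-task prompts."""
    kls = [forward_kl(p, q) for p, q in zip(base_dists, tuned_dists)]
    return sum(kls) / len(kls)

# Hypothetical output distributions for two new-task prompts (three outputs each).
base = [[0.7, 0.2, 0.1], [0.5, 0.3, 0.2]]
tuned_close = [[0.6, 0.3, 0.1], [0.5, 0.3, 0.2]]   # small shift from the base model
tuned_far = [[0.05, 0.05, 0.9], [0.1, 0.1, 0.8]]   # large shift from the base model

kl_close = mean_forward_kl(base, tuned_close)
kl_far = mean_forward_kl(base, tuned_far)
print(f"close: {kl_close:.3f}  far: {kl_far:.3f}")
```

Under the forgetting law, the model with the larger value would be expected to lose more of its prior capabilities, and nothing in the computation touches prior-task data.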

What do experiments on large language models reveal?

Using Qwen 2.5 3B-Instruct as the base model, fine-tuning was performed on:

- Math reasoning (Open-Reasoner-Zero),

- Science Q&A (SciKnowEval subset),

- Tool use (ToolAlpaca).

Performance was evaluated on prior benchmarks such as HellaSwag, MMLU, TruthfulQA, and HumanEval. Results showed that RL improved new-task accuracy while keeping prior-task accuracy stable, whereas SFT consistently sacrificed prior knowledge.

How does RL compare to SFT in robotics tasks?

In robotic control experiments with OpenVLA-7B fine-tuned in SimplerEnv pick-and-place scenarios, RL adaptation maintained general manipulation skills across tasks. SFT, while successful on the new task, degraded prior manipulation abilities, again illustrating RL's conservatism in preserving knowledge.

What insights come from the ParityMNIST study?

To isolate mechanisms, the research team introduced a toy problem, ParityMNIST. Here, RL and SFT both reached high new-task accuracy, but SFT induced sharper declines on the FashionMNIST auxiliary benchmark. Crucially, plotting forgetting against KL divergence revealed a single predictive curve, validating KL as the governing factor.

Why do on-policy updates matter?

On-policy RL samples from the model's own outputs, incrementally reweighting them by reward. This process constrains learning to distributions already close to the base model. SFT, in contrast, optimizes against fixed labels that may be arbitrarily distant. Theoretical analysis shows policy gradients converge to KL-minimal optimal solutions, formalizing RL's advantage.
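
The contrast can be illustrated with a toy example, sketched here under strong simplifying assumptions: a single prompt, four possible answers, two of which are correct, and exact expected gradients rather than sampled ones. The base policy, reward vector, SFT label, learning rate, and target accuracy are all made up for illustration and are not the study's setup.

```python
import math

def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def forward_kl(p_base, p_new):
    return sum(p * math.log(p / q) for p, q in zip(p_base, p_new) if p > 0.0)

BASE = [0.60, 0.30, 0.08, 0.02]   # hypothetical base policy over 4 answers
REWARD = [0.0, 1.0, 0.0, 1.0]     # answers 1 and 3 are both correct
SFT_LABEL = 3                     # fixed label that the base policy rarely picks
TARGET_ACC = 0.95                 # stop both methods at the same new-task accuracy
LR = 0.1

def accuracy(probs):
    return sum(p * r for p, r in zip(probs, REWARD))

def train(step_fn, max_steps=100_000):
    logits = [math.log(p) for p in BASE]
    for _ in range(max_steps):
        probs = softmax(logits)
        if accuracy(probs) >= TARGET_ACC:
            break
        logits = step_fn(logits, probs)
    return softmax(logits)

def rl_step(logits, probs):
    # Exact expected policy gradient for a softmax policy: each answer is
    # reweighted in proportion to its current probability and its advantage.
    baseline = accuracy(probs)
    return [z + LR * p * (r - baseline) for z, p, r in zip(logits, probs, REWARD)]

def sft_step(logits, probs):
    # Cross-entropy gradient toward the fixed label, regardless of what
    # the current policy would have produced on its own.
    return [
        z + LR * ((1.0 if i == SFT_LABEL else 0.0) - p)
        for i, (z, p) in enumerate(zip(logits, probs))
    ]

rl_policy = train(rl_step)
sft_policy = train(sft_step)
kl_rl = forward_kl(BASE, rl_policy)
kl_sft = forward_kl(BASE, sft_policy)
print(f"RL : acc={accuracy(rl_policy):.3f}  KL(base||new)={kl_rl:.3f}")
print(f"SFT: acc={accuracy(sft_policy):.3f}  KL(base||new)={kl_sft:.3f}")
```

In this toy setting both updates reach the same accuracy, but the RL update mostly amplifies the correct answer the base policy already favored, while the SFT update pushes mass onto the rarely chosen label and ends up with a larger forward KL from the base policy.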

Are other explanations sufficient?

The research team tested alternatives: weight-space changes, hidden representation drift, sparsity of updates, and alternative distributional metrics (reverse KL, total variation, L2 distance). None matched the predictive strength of forward KL divergence, reinforcing that distributional closeness is the critical factor.
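
For reference, the distributional metrics named above can be written down for a pair of categorical distributions as follows. This is a small sketch using standard textbook definitions; the function names and the example distributions are hypothetical, and the study's exact estimators may differ.

```python
import math

def forward_kl(p_base, p_tuned, eps=1e-12):
    """KL(base || tuned): expectation taken under the base distribution."""
    return sum(
        p * math.log(p / max(q, eps))
        for p, q in zip(p_base, p_tuned)
        if p > 0.0
    )

def reverse_kl(p_base, p_tuned, eps=1e-12):
    """KL(tuned || base): same formula with the arguments swapped."""
    return forward_kl(p_tuned, p_base, eps)

def total_variation(p_base, p_tuned):
    """Half the L1 distance between the two distributions."""
    return 0.5 * sum(abs(p - q) for p, q in zip(p_base, p_tuned))

def l2_distance(p_base, p_tuned):
    """Euclidean distance between the two probability vectors."""
    return math.sqrt(sum((p - q) ** 2 for p, q in zip(p_base, p_tuned)))

# Hypothetical base and fine-tuned distributions over three outputs.
base = [0.7, 0.2, 0.1]
tuned = [0.2, 0.2, 0.6]

fkl = forward_kl(base, tuned)
rkl = reverse_kl(base, tuned)
tv = total_variation(base, tuned)
l2 = l2_distance(base, tuned)
print(f"forward KL={fkl:.3f}  reverse KL={rkl:.3f}  TV={tv:.3f}  L2={l2:.3f}")
```

The four numbers generally disagree for the same pair of distributions, which is why it is an empirical question which one tracks forgetting best.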

What are the broader implications?

- Evaluation: Post-training should consider KL-conservatism, not just task accuracy.

- Hybrid methods: Combining SFT efficiency with explicit KL minimization could yield optimal trade-offs.

- Continual learning: RL's Razor offers a measurable criterion for designing adaptive agents that learn new skills without erasing old ones.
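
One way to picture the hybrid idea is a supervised loss with an added forward KL penalty toward the base model. The sketch below is an assumption made for illustration, not a method from the study: the function name `kl_regularized_sft_loss`, the weight `beta`, and the example distributions are all hypothetical.

```python
import math

def kl_regularized_sft_loss(p_tuned, p_base, label, beta=0.1, eps=1e-12):
    """Cross-entropy on the label plus beta * KL(p_base || p_tuned)."""
    cross_entropy = -math.log(max(p_tuned[label], eps))
    kl_penalty = sum(
        p * math.log(p / max(q, eps))
        for p, q in zip(p_base, p_tuned)
        if p > 0.0
    )
    return cross_entropy + beta * kl_penalty

# Hypothetical base policy and a candidate fine-tuned policy for one prompt.
base = [0.60, 0.30, 0.08, 0.02]
candidate = [0.03, 0.03, 0.02, 0.92]
label = 3

plain = kl_regularized_sft_loss(candidate, base, label, beta=0.0)
penalized = kl_regularized_sft_loss(candidate, base, label, beta=1.0)
print(f"plain SFT loss={plain:.3f}  with KL penalty={penalized:.3f}")
```

With `beta=0.0` this reduces to ordinary cross-entropy; raising `beta` makes policies that drift far from the base model more expensive, at the cost of fitting the label less aggressively.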

Conclusion

The MIT research reframes catastrophic forgetting as a distributional problem governed by forward KL divergence. Reinforcement learning forgets less because its on-policy updates naturally bias toward KL-minimal solutions. This principle, RL's Razor, provides both an explanation for RL's robustness and a roadmap for developing post-training methods that support lifelong learning in foundation models.

Key Takeaways

- Reinforcement learning (RL) preserves prior knowledge better than supervised fine-tuning (SFT): Even when both achieve the same accuracy on new tasks, RL retains prior capabilities while SFT erases them.

- Forgetting is predictable by KL divergence: The degree of catastrophic forgetting is strongly correlated with the forward KL divergence between the fine-tuned and base policy, measured on the new task.

- RL's Razor principle: On-policy RL converges to KL-minimal solutions, ensuring updates remain close to the base model and reducing forgetting.

- Empirical validation across domains: Experiments on LLMs (math, science Q&A, tool use) and robotics tasks confirm RL's robustness against forgetting, while SFT consistently trades old knowledge for new-task performance.

- Controlled experiments confirm generality: In the ParityMNIST toy setting, both RL and SFT showed forgetting aligned with KL divergence, showing that the principle holds beyond large-scale models.

- Future design axis for post-training: Algorithms should be evaluated not only by new-task accuracy but also by how conservatively they shift distributions in KL space, opening avenues for hybrid RL–SFT methods.
