Picture by Creator

# Introduction

For those who’re constructing functions with massive language fashions (LLMs), you’ve got most likely skilled this state of affairs the place you alter a immediate, run it a number of occasions, and the output feels higher. However is it truly higher? With out goal metrics, you might be caught in what the trade now calls “vibe testing,” which implies making choices primarily based on instinct fairly than information.

The problem comes from a basic attribute of AI fashions: uncertainty. Not like conventional software program, the place the identical enter at all times produces the identical output, LLMs can generate totally different responses to comparable prompts. This makes standard unit testing ineffective and leaves builders guessing whether or not their adjustments really improved efficiency.

Then got here Google Stax, a brand new experimental toolkit from Google DeepMind and Google Labs designed to deliver accuracy to AI analysis. On this article, we check out how Stax allows builders and information scientists to check fashions and prompts towards their very own customized standards, changing subjective judgments with repeatable, data-driven choices.

# Understanding Google Stax

Stax is a developer software that simplifies the analysis of generative AI fashions and functions. Consider it as a testing framework particularly constructed for the distinctive challenges of working with LLMs.

At its core, Stax solves a easy however important drawback: how are you aware if one mannequin or immediate is best than one other in your particular use case? Quite than counting on common standards that won’t mirror your utility’s wants, Stax allows you to outline what “good” means in your venture and measure towards these requirements.

// Exploring Key Capabilities

- It helps outline your individual success standards past generic metrics like fluency and security

- You possibly can take a look at totally different prompts throughout varied fashions side-by-side

- You can also make data-driven choices by visualizing gathered efficiency metrics, together with high quality, latency, and token utilization

- It could run assessments at scale utilizing your individual datasets

Stax is versatile, supporting not solely Google’s Gemini fashions but in addition OpenAI’s GPT, Anthropic’s Claude, Mistral, and others by means of API integrations.

# Shifting Past Normal Benchmarks

Common AI benchmarks serve an necessary goal, like serving to observe mannequin progress at a excessive degree. Nevertheless, they typically fail to mirror domain-specific necessities. A mannequin that excels at open-domain reasoning would possibly carry out poorly on specialised duties like:

- Compliance-focused summarization

- Authorized doc evaluation

- Enterprise-specific Q&A

- Model-voice adherence

The hole between common benchmarks and real-world functions is the place Stax offers worth. It lets you consider AI programs primarily based in your information and your standards, not summary world scores.

# Getting Began With Stax

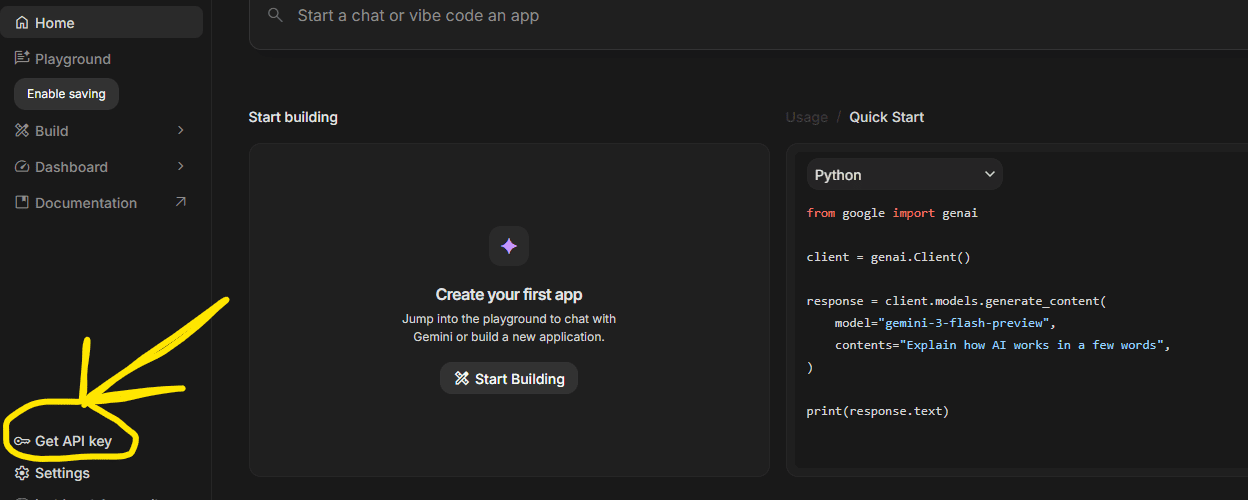

// Step 1: Including An API Key

To generate mannequin outputs and run evaluations, you may want so as to add an API key. Stax recommends beginning with a Gemini API key, because the built-in evaluators use it by default, although you’ll be able to configure them to make use of different fashions. You possibly can add your first key throughout onboarding or later in Settings.

For evaluating a number of suppliers, add keys for every mannequin you need to take a look at; this allows parallel comparability with out switching instruments.

Getting an API key

// Step 2: Creating An Analysis Venture

Tasks are the central workspace in Stax. Every venture corresponds to a single analysis experiment, for instance, testing a brand new system immediate or evaluating two fashions.

You will select between two venture varieties:

| Venture Kind | Finest For |

|---|---|

| Single Mannequin | Baselining efficiency or testing an iteration of a mannequin or system immediate |

| Aspect-by-Aspect | Immediately evaluating two totally different fashions or prompts head-to-head on the identical dataset |

Determine 1: A side-by-side comparability flowchart exhibiting two fashions receiving the identical enter prompts and their outputs flowing into an evaluator that produces comparability metrics

// Step 3: Constructing Your Dataset

A strong analysis begins with information that’s correct and displays your real-world use circumstances. Stax affords two main strategies to realize this:

Possibility A: Including Information Manually within the Immediate Playground

If you do not have an current dataset, construct one from scratch:

- Choose the mannequin(s) you need to take a look at

- Set a system immediate (elective) to outline the AI’s function

- Add person prompts that symbolize actual person inputs

- Present human rankings (elective) to create baseline high quality scores

Every enter, output, and score mechanically saves as a take a look at case.

Possibility B: Importing an Current Dataset

For groups with manufacturing information, add CSV recordsdata straight. In case your dataset would not embrace mannequin outputs, click on “Generate Outputs” and choose a mannequin to generate them.

Finest follow: Embrace the sting circumstances and conflicting examples in your dataset to make sure complete testing.

# Evaluating AI Outputs

// Conducting Guide Analysis

You possibly can present human rankings on particular person outputs straight within the playground or on the venture benchmark. Whereas human analysis is taken into account the “gold commonplace,” it is sluggish, costly, and tough to scale.

// Performing Automated Analysis With Autoraters

To attain many outputs directly, Stax makes use of LLM-as-judge analysis, the place a robust AI mannequin assesses one other mannequin’s outputs primarily based in your standards.

Stax contains preloaded evaluators for widespread metrics:

- Fluency

- Factual consistency

- Security

- Instruction following

- Conciseness

The Stax analysis interface exhibiting a column of mannequin outputs with adjoining rating columns from varied evaluators, plus a “Run Analysis” button

// Leveraging Customized Evaluators

Whereas preloaded evaluators present a superb start line, constructing customized evaluators is one of the best ways to measure what issues in your particular use case.

Customized evaluators allow you to outline particular standards like:

- “Is the response useful however not overly acquainted?”

- “Does the output include any personally identifiable data (PII)?”

- “Does the generated code observe our inner fashion information?”

- “Is the model voice in line with our pointers?”

To construct a customized evaluator: Outline your clear standards, write a immediate for the decide mannequin that features a scoring guidelines, and take a look at it towards a small pattern of manually rated outputs to make sure alignment.

# Exploring Sensible Use Instances

// Reviewing Use Case 1: Buyer Assist Chatbot

Think about that you’re constructing a buyer assist chatbot. Your necessities would possibly embrace the next:

- Skilled tone

- Correct solutions primarily based in your information base

- No hallucinations

- Decision of widespread points inside three exchanges

With Stax, you’d:

- Add a dataset of actual buyer queries

- Generate responses from totally different fashions (or totally different immediate variations)

- Create a customized evaluator that scores for professionalism and accuracy

- Examine outcomes side-by-side to pick out one of the best performer

// Reviewing Use Case 2: Content material Summarization Software

For a information summarization utility, you care about:

- Conciseness (summaries underneath 100 phrases)

- Factual consistency with the unique article

- Preservation of key data

Utilizing Stax’s pre-built Summarization High quality evaluator provides you fast metrics, whereas customized evaluators can implement particular size constraints or model voice necessities.

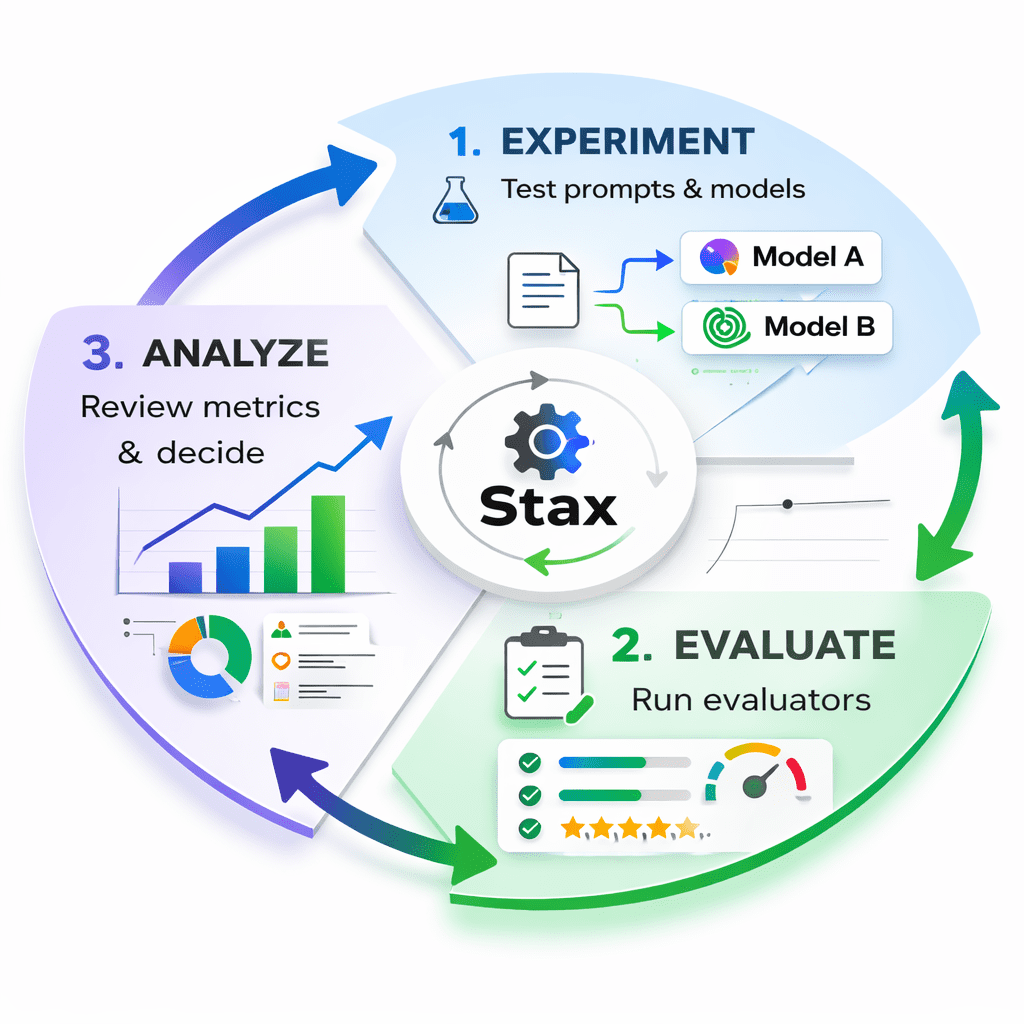

Determine 2: A visible of the Stax Flywheel exhibiting three phases: Experiment (take a look at prompts/fashions), Consider (run evaluators), and Analyze (assessment metrics and resolve)

# Deciphering Outcomes

As soon as evaluations are full, Stax provides new columns to your dataset exhibiting scores and rationales for each output. The Venture Metrics part offers an aggregated view of:

- Human rankings

- Common evaluator scores

- Inference latency

- Token counts

Use this quantitative information to:

- Examine iterations: Does Immediate A persistently outperform Immediate B?

- Select between fashions: Is the sooner mannequin definitely worth the slight drop in high quality?

- Monitor progress: Are your optimizations truly bettering efficiency?

- Determine failures: Which inputs persistently produce poor outputs?

Determine 3: A dashboard view exhibiting bar charts evaluating two fashions throughout a number of metrics (high quality rating, latency, price)

# Implementing Finest Practices For Efficient Evaluations

- Begin Small, Then Scale: You do not want a whole lot of take a look at circumstances to get worth. An analysis set with simply ten high-quality prompts is endlessly extra helpful than counting on vibe testing alone. Begin with a targeted set and broaden as you be taught.

- Create Regression Assessments: Your evaluations ought to embrace assessments that defend current high quality. For instance, “at all times output legitimate JSON” or “by no means embrace competitor names.” These stop new adjustments from breaking what already works.

- Construct Problem Units: Create datasets focusing on areas the place you need your AI to enhance. In case your mannequin struggles with advanced reasoning, construct a problem set particularly for that functionality.

- Do not Abandon Human Evaluate: Whereas automated analysis scales properly, having your crew use your AI product stays essential for constructing instinct. Use Stax to seize compelling examples from human testing and incorporate them into your formal analysis datasets.

# Answering Steadily Requested Questions

- What’s Google STAX? Stax is a developer software from Google for evaluating LLM-powered functions. It helps you take a look at fashions and prompts towards your individual standards fairly than counting on common benchmarks.

- How does Stax AI work? Stax makes use of an “LLM-as-judge” method the place you outline analysis standards, and an AI mannequin scores outputs primarily based on these standards. You need to use pre-built evaluators or create customized ones.

- Which software from Google permits people to make their machine studying fashions? Whereas Stax focuses on analysis fairly than mannequin creation, it really works alongside different Google AI instruments. For constructing and coaching fashions, you’d sometimes use TensorFlow or Vertex AI. Stax then helps you consider these fashions’ efficiency.

- What’s Google’s equal of ChatGPT? Google’s main conversational AI is Gemini (previously Bard). Stax might help you take a look at and optimize prompts for Gemini and examine its efficiency towards different fashions.

- Can I prepare AI alone information? Stax would not prepare fashions; it evaluates them. Nevertheless, you need to use your individual information as take a look at circumstances to guage pre-trained fashions. For coaching customized fashions in your information, you’d use instruments like Vertex AI.

# Conclusion

The period of vibe testing is ending. As AI strikes from experimental demos to manufacturing programs, detailed analysis turns into necessary. Google Stax offers the framework to outline what “good” means in your distinctive use case and the instruments to measure it systematically.

By changing subjective judgments with repeatable, data-driven evaluations, Stax helps you:

- Ship AI options with confidence

- Make knowledgeable choices about mannequin choice

- Iterate sooner on prompts and system directions

- Construct AI merchandise that reliably meet person wants

Whether or not you are a newbie information scientist or an skilled ML engineer, adopting structured analysis practices will remodel the way you construct with AI. Begin small, outline what issues in your utility, and let information information your choices.

Prepared to maneuver past vibe testing? Go to stax.withgoogle.com to discover the software and be part of the group of builders constructing higher AI functions.

// References

Shittu Olumide is a software program engineer and technical author captivated with leveraging cutting-edge applied sciences to craft compelling narratives, with a eager eye for element and a knack for simplifying advanced ideas. It’s also possible to discover Shittu on Twitter.