Picture by Writer

# Introduction

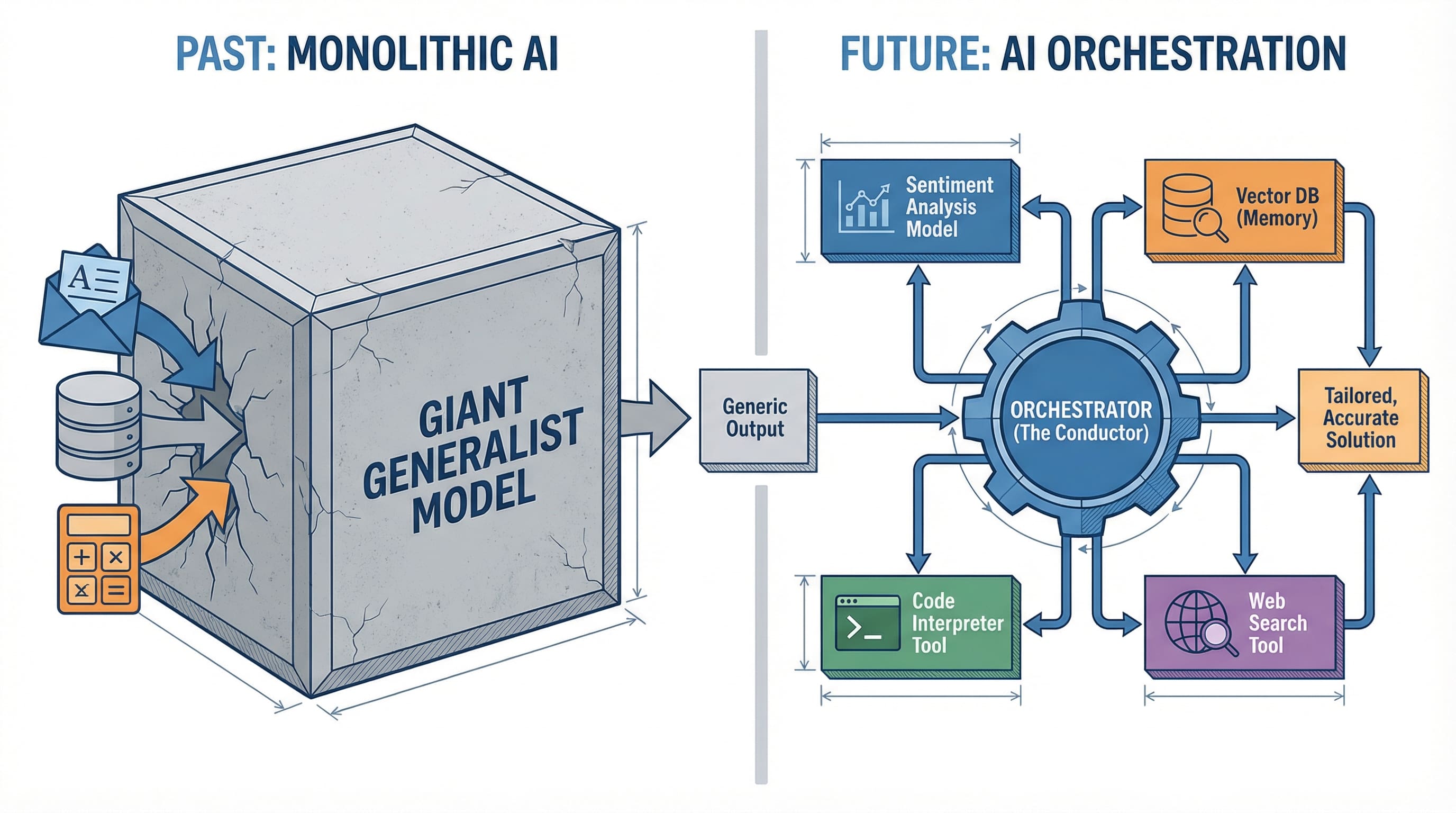

For the previous two years, the AI trade has been locked in a race to construct ever-larger language fashions. GPT-4, Claude, Gemini: every promising to be the singular resolution to each AI downside. However whereas firms competed to create the most important mind, a quiet revolution was taking place in manufacturing environments. Builders stopped asking “which mannequin is finest?” and began asking “how do I make a number of fashions work collectively?”

This shift marks the rise of AI orchestration, and it is altering how we construct clever functions.

# Why One AI Cannot Rule Them All

The dream of a single, omnipotent AI mannequin is interesting. One API name, one response, one invoice. However actuality has confirmed extra advanced.

Think about a customer support utility. You want sentiment evaluation to gauge buyer emotion, information retrieval to seek out related data, response technology to craft replies, and high quality checking to make sure accuracy. Whereas GPT-4 can technically deal with all these duties, every requires totally different optimization. A mannequin educated to excel at sentiment evaluation makes totally different architectural tradeoffs than one optimized for textual content technology.

The breakthrough is not in constructing one mannequin to rule all of them. It is in coordinating a number of specialists.

This mirrors a sample we have seen earlier than in software program structure. Microservices changed monolithic functions not as a result of any single microservice was superior, however as a result of coordinated specialised companies proved extra maintainable, scalable, and efficient. AI is having its microservices second.

# The Three-Layer Stack

Understanding trendy AI functions requires considering in layers. The structure that is emerged from manufacturing deployments seems to be remarkably constant.

// The Mannequin Layer

The Mannequin Layer sits on the basis. This contains your LLMs, whether or not GPT-4, Claude, native fashions like Llama, or specialised fashions for imaginative and prescient, code, or evaluation. Every mannequin brings particular capabilities: reasoning, technology, classification, or transformation. The important thing perception is that you simply’re now not selecting one mannequin. You are composing a group.

// The Instrument Layer

The Instrument Layer allows motion. Language fashions can assume however cannot do something on their very own. They want instruments to work together with the world. This layer contains internet search, database queries, API calls, code execution environments, and file programs. When Claude “searches the net” or ChatGPT “runs Python code,” they’re utilizing instruments from this layer. The Mannequin Context Protocol (MCP), lately launched by Anthropic, is standardizing how fashions hook up with instruments, making this layer more and more plug-and-play.

// The Orchestration Layer

The Orchestration Layer coordinates all the pieces. That is the place the intelligence of your system really lives. The orchestrator decides which mannequin to invoke for which job, when to name instruments, how one can chain operations collectively, and how one can deal with failures. It is the conductor of your AI symphony.

Fashions are musicians, instruments are devices, and orchestration is the sheet music that tells everybody when to play.

# Orchestration Frameworks: Understanding the Patterns

Simply as React and Vue standardized frontend growth, orchestration frameworks are standardizing how we construct AI programs. However earlier than we focus on particular instruments, we have to perceive the architectural patterns they symbolize. Instruments come and go. Patterns endure.

// The Chain Sample (Sequential Logic)

The Chain Sample (Sequential Logic) is orchestration’s most elementary sample. Consider it as a knowledge pipeline the place every step’s output turns into the following step’s enter. Consumer query, retrieve context, generate response, validate output. Every operation occurs in sequence, with the orchestrator managing the handoffs. LangChain pioneered this sample and constructed a whole framework round making chains composable and reusable.

The power of chains lies of their simplicity: you may cause concerning the move, debug step-by-step, and optimize particular person levels. The limitation is rigidity. Chains do not adapt based mostly on intermediate outcomes. If step two discovers the query is unanswerable, the chain nonetheless marches via steps three and 4. However for predictable workflows with clear levels, chains work effectively.

// The RAG Sample (Retrieval-First Logic)

The RAG Sample (Retrieval-First Logic) emerged from a particular downside: language fashions hallucinate after they lack data. The answer is straightforward: retrieve related data first, then generate responses grounded in that knowledge.

However architecturally, RAG represents one thing deeper: Simply-in-Time Context Injection. Consider it because the separation of Compute (the LLM) from Reminiscence (the Vector Retailer). The mannequin itself stays static. It does not study new info. As a substitute, you swap what’s within the mannequin’s “RAM” by injecting related context into its immediate window. You are not retraining the mind. You are giving it entry to the precise data it wants, exactly when it wants it.

This architectural precept (Question, Search information base, Rank outcomes by relevance, Inject into context, Generate response) works as a result of it turns a generative downside right into a retrieval plus synthesis downside, and retrieval is extra dependable than technology.

What makes this an enduring sample quite than only a method is that this separation of issues. The mannequin handles reasoning and synthesis. The vector retailer handles reminiscence and recall. The orchestrator manages the injection timing. LlamaIndex constructed its total framework round optimizing this sample, dealing with the onerous components of doc chunking, embedding technology, vector storage, and retrieval rating. You possibly can see how RAG works in observe even with easy no-code instruments.

// The Multi-Agent Sample (Delegation Logic)

The Multi-Agent Sample (Delegation Logic) represents orchestration’s most subtle evolution. As a substitute of 1 sequential move or one retrieval step, you create specialised brokers that delegate to one another. A “planner” agent breaks down advanced duties. “Researcher” brokers collect data. “Analyst” brokers course of knowledge. “Author” brokers produce output. “Critic” brokers evaluation high quality.

CrewAI exemplifies this sample, however the idea predates the software. The architectural perception is that advanced intelligence emerges from coordination between specialists, not from one generalist making an attempt to do all the pieces. Every agent has a slender duty, clear success standards, and the power to request assist from different brokers. The orchestrator manages the delegation graph, making certain brokers do not loop infinitely and work progresses towards the objective. If you wish to dive deeper into how brokers work collectively, take a look at key agentic AI ideas.

The selection between patterns is not about which is “finest.” It is about matching sample to downside. Easy, predictable workflows? Use chains. Information-intensive functions? Use RAG. Complicated, multi-step reasoning requiring totally different specializations? Use multi-agent. Manufacturing programs usually mix all three: a multi-agent system the place every agent makes use of RAG internally and communicates via chains.

The Mannequin Context Protocol deserves particular point out because the rising commonplace beneath these patterns. MCP is not a sample itself however a common protocol for a way fashions hook up with instruments and knowledge sources. Launched by Anthropic in late 2024, it is changing into the muse layer that frameworks construct upon, the HTTP of AI orchestration. As MCP adoption grows, we’re shifting towards standardized interfaces the place any sample can use any software, no matter which framework you have chosen.

# From Immediate to Pipeline: The Router Modifications Every little thing

Understanding orchestration conceptually is one factor. Seeing it in manufacturing reveals why it issues and exposes the element that determines success or failure.

Think about a coding assistant that helps builders debug points. A single-model strategy would ship code and error messages to GPT-4 and hope for one of the best. An orchestrated system works otherwise, and its success hinges on one essential element: the Router.

The Router is the decision-making engine on the coronary heart of each orchestrated system. It examines incoming requests and determines which pathway via your system they need to take. This is not simply plumbing. Routing accuracy determines whether or not your orchestrated system outperforms a single mannequin or wastes money and time on pointless complexity.

Let’s return to our debugging assistant. When a developer submits an issue, the Router should resolve: Is that this a syntax error? A runtime error? A logic error? Every kind requires totally different dealing with.

How an Clever Router acts as a call engine to direct inputs to specialised pathways | Picture by Writer

Syntax errors path to a specialised code analyzer, a light-weight mannequin fine-tuned for parsing violations. Runtime errors set off the debugger software to look at program state, then cross findings to a reasoning mannequin that understands execution context. Logic errors require a distinct path solely: search Stack Overflow for related points, retrieve related context, then invoke a reasoning mannequin to synthesize options.

However how does the Router resolve? Three approaches dominate manufacturing programs.

Semantic routing makes use of embedding similarity. Convert the person’s query right into a vector, examine it to embeddings of instance questions for every route, and ship it down the trail with highest similarity. Quick and efficient for clearly distinct classes. The debugger makes use of this when error sorts are well-defined and examples are plentiful.

Key phrase routing examines specific indicators. If the error message incorporates “SyntaxError,” path to the parser. If it incorporates “NullPointerException,” path to the runtime handler. Easy, quick, and surprisingly strong when you’ve gotten dependable indicators. Many manufacturing programs begin right here earlier than including complexity.

LLM-decision routing makes use of a small, quick mannequin because the Router itself. Ship the request to a specialised classification mannequin that is been educated or prompted to make routing choices. Extra versatile than key phrases, extra dependable than pure semantic similarity, however provides latency and value. GitHub Copilot and related instruments use variations of this strategy.

Here is the perception that issues: The success of your orchestrated system relies upon 90% on Router accuracy, not on the sophistication of your downstream fashions. An ideal GPT-4 response despatched down the mistaken path helps nobody. A good response from a specialised mannequin routed appropriately solves the issue.

This creates an surprising optimization goal. Groups obsess over which LLM to make use of for technology however neglect Router engineering. They need to do the other. A easy Router making appropriate choices beats a posh Router that is ceaselessly mistaken. Manufacturing groups measure routing accuracy religiously. It is the metric that predicts system success.

The Router additionally handles failures and fallbacks. What if semantic routing is not assured? What if the net search returns nothing? Manufacturing Routers implement resolution timber: attempt semantic routing first, fall again to key phrase matching if confidence is low, escalate to LLM-decision routing for edge circumstances, and at all times keep a default path for actually ambiguous inputs.

This explains why orchestrated programs constantly outperform single fashions regardless of added complexity. It isn’t that orchestration magically makes fashions smarter. It is that correct routing ensures specialised fashions solely see issues they’re optimized to unravel. A syntax analyzer solely analyzes syntax. A reasoning mannequin solely causes. Every element operates in its zone of excellence as a result of the Router protected it from issues it will possibly’t deal with.

The structure sample is common: Router on the entrance, specialised processors behind it, orchestrator managing the move. Whether or not you are constructing a customer support bot, a analysis assistant, or a coding software, getting the Router proper determines whether or not your orchestrated system succeeds or turns into an costly, sluggish various to GPT-4.

# When to Orchestrate, When to Hold It Easy

Not each AI utility wants orchestration. A chatbot that solutions FAQs? Single mannequin. A system that classifies help tickets? Single mannequin. Producing product descriptions? Single mannequin.

Orchestration is sensible once you want:

A number of capabilities that no single mannequin handles effectively. Customer support requiring sentiment evaluation, information retrieval, and response technology advantages from orchestration. Easy Q&A does not.

Exterior knowledge or actions. In case your AI wants to go looking databases, name APIs, or execute code, orchestration manages these software interactions higher than making an attempt to immediate a single mannequin to “fake” it will possibly entry knowledge.

Reliability via redundancy. Manufacturing programs usually chain a quick, low cost mannequin for preliminary processing with a succesful, costly mannequin for advanced circumstances. The orchestrator routes based mostly on issue.

Price optimization. Utilizing GPT-4 for all the pieces is dear. Orchestration helps you to route easy duties to cheaper fashions and reserve costly fashions for onerous issues.

The choice framework is easy: begin easy. Use a single mannequin till you hit clear limitations. Add orchestration when the complexity pays for itself in higher outcomes, decrease prices, or new capabilities.

# Remaining Ideas

AI orchestration represents a maturation of the sector. We’re shifting from “which mannequin ought to I exploit?” to “how ought to I architect my AI system?” This mirrors each expertise’s evolution, from monolithic to distributed, from selecting one of the best software to composing the fitting instruments.

The frameworks exist. The patterns are rising. The query now could be whether or not you will construct AI functions the outdated approach (hoping one mannequin can do all the pieces) or the brand new approach: orchestrating specialised fashions and instruments into programs which are better than the sum of their components.

The way forward for AI is not find the proper mannequin. It is in studying to conduct the orchestra.

Vinod Chugani is an AI and knowledge science educator who bridges the hole between rising AI applied sciences and sensible utility for working professionals. His focus areas embody agentic AI, machine studying functions, and automation workflows. By means of his work as a technical mentor and teacher, Vinod has supported knowledge professionals via ability growth and profession transitions. He brings analytical experience from quantitative finance to his hands-on educating strategy. His content material emphasizes actionable methods and frameworks that professionals can apply instantly.